An advanced use case of POS tagging is building a chatbot.

To use NLTK for POS tagging, you first have to download the averaged perceptron tagger using nltk.download("averaged_perceptron_tagger"). Then you apply the nltk.pos_tag() method to all the generated tokens, such as the token_list5 variable in this example.

    nltk.download("averaged_perceptron_tagger")
    nltk.pos_tag(token_list5)

TF-IDF (Term Frequency-Inverse Document Frequency) Text Mining

Machine learning models accept only numeric input, but text consists of words, letters, and other symbols. To apply machine learning to text, you can use TF-IDF to convert the text into a numeric table representation. A TF-IDF table has one row for each document in the corpus. Each cell holds a value that measures the strength of a word in that particular document. The higher the strength of a word, the stronger the association between the word and the document.

How does TF-IDF work? Step by step

Step 1: Read the corpus
First of all, read the corpus.

Step 2: Clean the corpus
After reading the corpus, your next step is to clean it, for example by removing punctuation and stopwords.

Step 3: Create a count table
In this step, you create a table where the rows represent the documents and the columns represent the words. The value in each cell is the count of the word in that document, that is, how many times the word appeared in the document.
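Steps 1 to 3 can be sketched in plain Python as follows. This is only a minimal illustration, not the NLTK pipeline used elsewhere in this post: the three-document corpus, the tiny stopword set, and the helper names `clean` and `count_table` are all assumptions made up for the example.

```python
import string
from collections import Counter

# Hypothetical three-document corpus, used only for illustration.
corpus = [
    "You are a good person.",
    "A good person helps a person in need.",
    "You need good data!",
]

# Small illustrative stopword set (an assumption; NLTK ships a much longer list).
STOPWORDS = {"a", "are", "in", "you"}


def clean(document):
    """Lowercase, strip punctuation, split on whitespace, drop stopwords."""
    table = str.maketrans("", "", string.punctuation)
    tokens = document.lower().translate(table).split()
    return [token for token in tokens if token not in STOPWORDS]


def count_table(documents):
    """Return (vocabulary, rows) with one row of word counts per document."""
    counts = [Counter(clean(doc)) for doc in documents]
    vocabulary = sorted(set().union(*counts))
    rows = [[count[word] for word in vocabulary] for count in counts]
    return vocabulary, rows


vocabulary, rows = count_table(corpus)
print(vocabulary)  # column labels (words)
for row in rows:   # one row per document
    print(row)
```

Each row lines up with the sorted vocabulary, so the second row shows that "person" appears twice in the second document. Turning these raw counts into TF-IDF weights is a further step that this sketch does not cover.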

The full code from the previous tutorial is:

    import os
    import nltk
    from nltk.corpus import stopwords
    from nltk.tokenize import punkt
    file = open(os.getcwd() + "/sample.txt", "rt")
    raw_text = file.read()
    token_list = nltk.word_tokenize(raw_text)
    token_list2 = list(filter(lambda token: punkt.PunktToken(token).is_non_punct, token_list))
    # token_list3 is built in a step of the previous tutorial that is not shown here
    token_list4 = list(filter(lambda token: token not in stopwords.words("english"), token_list3))
    # token_list5 is likewise built in a step that is not shown here
    print("Total tokens : ", len(token_list5))

For n-grams, you have to import the ngrams module from nltk. Here I will print the bigrams and trigrams in the given sample text.

POS tagging is generally used to identify the part of speech of each word in a corpus. It means it identifies whether a word is a verb, a noun, an adjective, and so on. The NLTK package labels each part of speech with a short abbreviation such as NN (noun), JJ (adjective), or VBP (verb, non-third-person singular present). There are various popular use cases of POS tagging. It is used in entity recognition, filtering, and sentiment analysis.

You can think of N-grams as sequences of N consecutive items in a given sample of text. It is a bigram if N is 2, a trigram if N is 3, a four-gram if N is 4, and so on. For example, consider the text "You are a good person". The trigrams (N = 3) for it are: (You, are, a), (are, a, good), (a, good, person). I will continue with the same code that was written in the earlier post.
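The sliding-window idea behind N-grams can be sketched in a few lines of plain Python. The `ngrams` function below is a hand-written stand-in written for this illustration, not NLTK's own implementation, and it assumes the text has already been split into tokens.

```python
def ngrams(tokens, n):
    """Return all n-grams (as tuples) over a sequence of tokens."""
    # Slide a window of width n across the token list, one position at a time.
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


tokens = "You are a good person".split()
bigrams = ngrams(tokens, 2)
trigrams = ngrams(tokens, 3)

print(bigrams)
print(trigrams)
```

A text with 5 tokens gives 4 bigrams and 3 trigrams; if N is larger than the number of tokens, the function returns an empty list.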

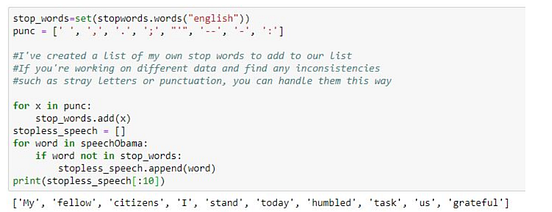

There are various text preprocessing steps, such as removing punctuation, removing stopwords, and tokenization, that leave meaningful text inside the corpus. There are also more advanced text processing techniques that help you create meaningful features for your NLP project. In this tutorial, you will learn about these techniques, including the building of N-grams and TF-IDF (Term Frequency-Inverse Document Frequency) text mining.
